By: Jerry Bromenshenk, Robert Seccomb, Colin Henderson, and David Firth, Bee Alert Technology Inc., Missoula, MT

NPR 02/18/2019, Headline: Massive Loss of Thousands of Hives … This article underscores a fundamental problem: in the U.S., Canada, and most other countries, there is no system for early detection and rapid reporting of emerging colony health issues when and where they occur. Bees need the equivalent of the U.S. CDC's human influenza updates and maps. Just one of the functions of Bee Health Guru, our smartphone app in which the bees themselves are the guru, indicating colony health by the sounds they produce, could solve this problem immediately.

For 12 years, we have been working toward a hand-held device for sensing, analyzing, and reporting colony conditions. Like a Star Trek Tricorder™, our bee-sound recording and analysis system uses machine intelligence (AI) to analyze colony sounds. In 2012, we built several hand-held bee recording devices that were big, expensive, and clumsy to use. Smaller, affordable, user-friendly devices with rapid processing and large storage capacity were unattainable until 2018, when smartphones with facial recognition for security reached the market. Facial recognition uses AI, which requires fast processors; it is a visual counterpart to our AI sound-recognition programs. Suddenly, our AI analyses, which had taken several minutes on existing tablets, laptops, and earlier smartphones, dropped to seconds!

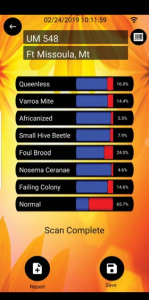

The primary purpose of our Bee Health Guru app is to let the bees themselves communicate each colony's health status. Colony sounds are recorded with either the smartphone's internal microphone or a slender external microphone plugged into the phone's microphone port. Our Bee Health Guru AI program analyzes each recording immediately and automatically. Our algorithms estimate, for each of eight conditions, the probability that the colony exhibits it. A form then appears on the phone's screen that gives the user the option to inspect the colony visually, confirm its condition, and save an inspection report. These three actions, (1) recording colony sounds, (2) predicting the likelihood of specific diseases, and (3) reporting the outcome of the colony inspection, provide the data needed to fine-tune our AI modules and to map occurrences of different colony problems. The maps will initially be based on visual inspection reports; as the app's accuracy improves, they should eventually be based on the AI analysis reports alone, with no inspection needed.
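To make the idea of per-condition probabilities concrete, here is a minimal Python sketch of what an analysis report might look like. It is not the app's actual code: the factor names, the `AnalysisReport` class, and the 0.5 alert threshold are assumptions for illustration, and it assumes each factor is scored independently, so the probabilities need not sum to 1.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class AnalysisReport:
    # Hypothetical structure: factor name -> estimated probability (0.0 to 1.0)
    # that the colony has a problem with that factor. Each factor is scored
    # independently (an assumption), so the values need not sum to 1.
    probabilities: dict[str, float]

    def __post_init__(self) -> None:
        # Reject values that cannot be probabilities.
        for factor, p in self.probabilities.items():
            if not 0.0 <= p <= 1.0:
                raise ValueError(f"probability for {factor!r} out of range: {p}")

    def flagged(self, threshold: float = 0.5) -> list[str]:
        """Factors at or above the (assumed) alert threshold, most likely first."""
        hits = [(f, p) for f, p in self.probabilities.items() if p >= threshold]
        hits.sort(key=lambda fp: fp[1], reverse=True)
        return [f for f, _ in hits]


# Hypothetical factor names and made-up probabilities, for illustration only.
report = AnalysisReport({"varroa": 0.82, "queenless": 0.10, "nosema": 0.55, "foulbrood": 0.03})
print(report.flagged())  # ['varroa', 'nosema']
```

In the app itself, the length of each red bar conveys these probabilities; a threshold like the one above only decides which factors a sketch like this would highlight.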

A Bee Health Guru recording takes 30 seconds. On my Android 8+ phone, analysis of all eight colony health factors takes 12 seconds. Filling out the inspection form takes less than a minute, although visually inspecting a colony takes longer. This last step, colony inspection, is critical during the early stages of app use: it is where app users become citizen scientists and help us learn to decode colony sounds accurately.

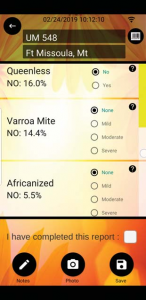

After the app records and generates an analysis report, the user is asked to inspect the colony visually to confirm or reject the app's sound analysis. A click of a button reveals a simple drop-down inspection form. The report is filled out at the hive and takes only a few keystrokes; a click of another button then uploads it, along with any notes and photographs, to our Cloud-based data archive. The app automatically attaches a copy of the recording and the AI colony health analysis report, and adds the date, time, and GPS location. The resulting electronic records have safeguards that protect the privacy, confidentiality, and security of beekeepers (e.g., names and hive or apiary locations) and of the patients, bees that may be sick (e.g., locations).
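Here is a minimal sketch of the bundle that might leave the phone after one recording and inspection, assuming a simple JSON record. The field names, the `ColonyRecord` class, the file name, and the JSON format are all hypothetical, not the app's actual schema. The sketch only illustrates two points from the text: date, time, and GPS location are filled in automatically, and there is no field for the beekeeper's name or phone model.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now() -> str:
    # Timestamp added automatically, in ISO 8601 with a UTC offset.
    return datetime.now(timezone.utc).isoformat()


@dataclass
class ColonyRecord:
    recording_file: str                  # hypothetical path to the audio clip
    probabilities: dict[str, float]      # AI analysis: factor -> probability
    inspection: dict[str, bool]          # factor -> True if inspection confirmed a problem
    latitude: float                      # filled in automatically from GPS
    longitude: float
    hive_id: str | None = None           # from a barcode photo, if one is used
    notes: str = ""
    recorded_at: str = field(default_factory=_utc_now)
    # Deliberately no fields for the beekeeper's name or phone model.

    def to_json(self) -> str:
        """Serialize the whole bundle for upload."""
        return json.dumps(asdict(self), sort_keys=True)


# Made-up example values, for illustration only.
record = ColonyRecord(
    recording_file="rec_0001.wav",
    probabilities={"varroa": 0.82, "queenless": 0.10},
    inspection={"varroa": True, "queenless": False},
    latitude=46.87,
    longitude=-113.99,
    hive_id="A-17",
)
print(record.to_json())
```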

Once all of this information is posted to our Cloud-based recording, analysis, and inspection data repository, we can accomplish two tasks: (1) Refining, re-training and upgrading the AI modules for each health factor analysis, and (2) Mapping of colony health trends over time and geographical space to share with citizen scientists and anyone else interested in bee health.

The Start Screen appears as soon as the user taps the Bee Icon to open the installed app. Insert the phone's microphone into the hive entrance up to the bull's-eye, or plug in an external microphone. Let the bees settle a bit, then touch the red center of the bull's-eye. The app will start, record for 30 seconds (this can be changed to 60), run the AI analyses, and then display the Analysis Report. The longer the red bar for a factor, the greater the probability that there's a problem. (Note: we've asked our app developer to change the word Normal (i.e., Normal Colony) to Abnormal, because people don't intuitively read red as a warning of a problem.)

If the user then moves to Inspection to confirm or reject each analysis factor, the Inspection Form drops down with a few questions. Tapping the ? for any factor brings up an explanation of the criteria. The app then returns to the start (Upload Screen). Note the Cloud with a number in the lower right corner; the number is the count of recordings, analysis reports, and inspection reports waiting to be uploaded. At the top of the screen is the GPS location of the last colony scanned.

At any point, when cellular or wireless service is available, tapping the bee icon near the bottom of a screen links the user to our www.beehealth.guru web page for further information, comparing notes with other users, etc. That is where our North American Bee Associations Directory will be made available (when the class finishes its project), and where we will probably post Bee Colony Health Updates and Maps.

Recording takes only a few keystrokes. The phone does much of the work automatically, such as capturing the time, date, GPS location, and hive ID (if a barcode is used, just touch a button to photograph it with the camera). The user's name, type of phone, etc. are not entered. Our overall goal is to make the app as simple to use as possible: people have sticky hands or gloves, so button clicks and typing must be kept to a minimum.
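The number beside the Cloud icon behaves like a queue of records waiting for a connection. A minimal sketch, assuming records are uploaded in the order they were made and that a failed upload leaves the record on the phone for the next attempt. The `UploadQueue` class and the `send` callback are hypothetical stand-ins, not the app's actual API.

```python
from collections import deque
from typing import Callable, Deque, Dict


class UploadQueue:
    """Keeps reports on the phone until they can be uploaded (hypothetical sketch)."""

    def __init__(self) -> None:
        self._pending: Deque[Dict] = deque()

    def add(self, record: Dict) -> None:
        self._pending.append(record)

    @property
    def pending_count(self) -> int:
        # The number shown beside the Cloud icon.
        return len(self._pending)

    def flush(self, send: Callable[[Dict], bool]) -> int:
        """Upload records oldest first; stop at the first failure.

        `send` stands in for the real network call and returns True on success.
        Returns how many records were uploaded.
        """
        uploaded = 0
        while self._pending:
            if not send(self._pending[0]):
                break  # no signal in the beeyard: keep the record and try later
            self._pending.popleft()
            uploaded += 1
        return uploaded


queue = UploadQueue()
for i in range(3):
    queue.add({"id": i})
print(queue.pending_count)            # 3
print(queue.flush(lambda r: False))   # 0, nothing sent while offline
print(queue.flush(lambda r: True))    # 3
print(queue.pending_count)            # 0
```

Stopping at the first failure keeps records in their original order and avoids repeatedly retrying over a weak connection.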

Refining the App

Unlike other honey bee acoustic scanning and monitoring systems (mainly from Europe), our programs do far more than look for simple frequency markers of overall colony noise, and they do not rely on the user to interpret sonograms. Our custom AIs assess a broad and complex spectrum of sound attributes far faster and better than any person could. This standardizes the analysis: results are comparable from colony to colony, without observer inaccuracy or bias, and no observer training is required.

For example, if the app reports a high probability that a colony lacks a laying queen, the inspection report should either confirm or disprove that result. With hundreds or thousands of examples provided by users of our app, we can take all of the recordings the app got wrong and all of those it got right, and re-train the AI. It's an iterative process that we know will refine and improve the app's accuracy.
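The re-training loop starts by pairing each recording with its inspection result. A minimal sketch, assuming records are plain dictionaries with hypothetical keys (`recording_file`, `probabilities`, `inspection`) and an assumed 0.5 threshold for turning a probability into a yes/no call. The re-training of the AI itself is not shown.

```python
def split_for_retraining(records, factor, threshold=0.5):
    """Sort inspected recordings into those the app got right and wrong for one factor.

    Each example is (recording_file, label), where the label is the inspection
    outcome, so the inspector's finding, not the app's guess, becomes the
    training target.
    """
    right, wrong = [], []
    for rec in records:
        if factor not in rec["inspection"]:
            continue  # the inspector did not assess this factor
        predicted = rec["probabilities"][factor] >= threshold
        actual = rec["inspection"][factor]
        example = (rec["recording_file"], actual)
        (right if predicted == actual else wrong).append(example)
    return right, wrong


# Toy records, not real data.
records = [
    {"recording_file": "a.wav", "probabilities": {"queenless": 0.91}, "inspection": {"queenless": True}},
    {"recording_file": "b.wav", "probabilities": {"queenless": 0.70}, "inspection": {"queenless": False}},
    {"recording_file": "c.wav", "probabilities": {"queenless": 0.05}, "inspection": {}},
]
right, wrong = split_for_retraining(records, "queenless")
print(right)  # [('a.wav', True)]
print(wrong)  # [('b.wav', False)]
```

Both groups feed re-training; the misclassified recordings are typically the most informative, because they show where the current model errs.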

Mapping Health Trends

As soon as visual colony inspection information is secured, automated updates and incident maps can be readily produced and posted using off-the-shelf interactive data visualization software. Posted maps will provide sufficient resolution to identify, for example, an outbreak area of Varroa mites, but will not pinpoint the actual location of affected colonies.
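One simple way to produce maps that show an outbreak area without pinpointing apiaries is to snap each report's GPS position to a coarse grid cell and count confirmed cases per cell. A minimal sketch follows. The 0.5-degree cell size (roughly 55 km north to south) and the record keys are assumptions, not the authors' chosen resolution or software.

```python
import math
from collections import Counter


def grid_cell(lat, lon, cell_deg=0.5):
    """Snap a GPS fix to the south-west corner of a coarse grid cell."""
    return (math.floor(lat / cell_deg) * cell_deg, math.floor(lon / cell_deg) * cell_deg)


def incident_counts(records, factor, cell_deg=0.5):
    """Count inspection-confirmed cases of `factor` per grid cell."""
    counts = Counter()
    for rec in records:
        if rec["inspection"].get(factor):
            counts[grid_cell(rec["latitude"], rec["longitude"], cell_deg)] += 1
    return dict(counts)


# Toy records, not real data.
records = [
    {"latitude": 46.87, "longitude": -113.99, "inspection": {"varroa": True}},
    {"latitude": 46.52, "longitude": -113.60, "inspection": {"varroa": True}},
    {"latitude": 45.68, "longitude": -111.04, "inspection": {"varroa": False}},
]
print(incident_counts(records, "varroa"))  # {(46.5, -114.0): 2}
```

Only the cell corner and a count leave this step, so the published map reveals an area, not an apiary.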

To enact the health trend mapping part of our app, we need beekeepers with smartphones, Android or iPhone, to download our app, inspect colonies, and in a timely manner, post the reports to our Cloud site. Our app stores all recordings and reports on each phone until the reports can be uploaded. Cellular service in the beeyard is not necessary. Obviously, we hope that every user also records colony sounds so that we get recordings and AI analysis results, but just the colony inspection reports alone can be used to generate bee health maps.

The inspection and reporting part of our app, by itself, should revolutionize bee health protection, providing early alerts based on visual inspections as soon as the reports are uploaded. Any beekeeper with a smartphone, anywhere in the world, will be able to report bee colony health problems as they are discovered. Initially, we have limited the health reports to eight major factors, such as Varroa mites, foulbrood, nosema, queen status, and other aspects of overall colony condition.

All of this is based on relatively recent progress in insect and bee ethology (behavior). An excellent overview appears in Insect Sounds and Communication (Drosopoulos and Claridge, 2005). We now know that in addition to the well-known symbolic dance language, bees also communicate via sound, using both vibratory and airborne-sound signals.

We can record these signals by laying either a phone's built-in microphone or a slender external microphone on the bottom board of the hive. Our discovery, formalized in acoustic monitoring and recording system patents published in the U.S. and Canada (Bromenshenk et al., 2015), was that we can decode bee-produced signals to identify colony exposures to hazardous and toxic chemicals, often including the category of chemical, as well as discern a variety of colony health conditions and even rank the level of infection or infestation by bee diseases and pests. Furthermore, bee sound or song repertoires are far more complex than the human ear can perceive, with frequencies beyond our audible range and additional components such as amplitude, pulses, shifts, and other related signals, all contained in the airborne-sound and vibratory spectrum.

This raises the question: can bees perceive the sounds and vibrations that they produce? Bees and many other insects have long been considered deaf to airborne sounds. Around 1940, Hansjochem Autrum and associates demonstrated that many insects perceive minute substrate vibrations and that some insects have hair-like structures that can function as receptors for air-particle velocity and airborne sound. But it wasn't until the 1990s that scientists found evidence that flies and bees appear to perceive airborne sounds through vibrations of the antennal flagellum, sensed by Johnston's organ in the antenna.

Modern capabilities for recording, manipulating, playing back, and analyzing the acoustic signals of honey bees and other insects have made acoustic behavior an advanced and active area of insect ethology. We are just now beginning to realize that the bee dance movements are only one part of a bee colony's communications. The communication processes that coordinate hundreds or thousands of individuals in a social bee colony are fascinatingly complex. We now have a means of tapping into colony communications.

Why do we need to fine-tune and calibrate? In over a decade of our own intensive research using high-end recorders and desktop computers, detection accuracy for the eight included factors ranged from 86% to 98%. Our AI programs learn and improve when we have real recordings from colonies with specific visible health factors. We now need to know which phones make accurate recordings and which do not. We've also learned that bees have accents: New Zealand bees produce somewhat different sounds than North American bees, and bees from the U.K. differ from both.
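Calibrating against inspection reports comes down to measuring, per factor, how often the app's call agrees with what the inspector saw. A minimal sketch, assuming the same hypothetical record shape and an assumed 0.5 threshold. It computes simple agreement (accuracy) and groups results by any label, here a hypothetical `region` field, which is one way regional "accents" could show up in the numbers. The article does not say which metric underlies the 86% to 98% figures.

```python
from collections import defaultdict


def accuracy_by_group(records, factor, group_key, threshold=0.5):
    """Fraction of inspected records where the app's call matched the inspection, per group."""
    agree = defaultdict(int)
    total = defaultdict(int)
    for rec in records:
        if factor not in rec["inspection"]:
            continue  # factor not inspected, so nothing to compare
        group = rec[group_key]
        predicted = rec["probabilities"][factor] >= threshold
        total[group] += 1
        agree[group] += predicted == rec["inspection"][factor]  # True counts as 1
    return {g: agree[g] / total[g] for g in total}


# Toy records, not real data.
records = [
    {"region": "North America", "probabilities": {"varroa": 0.9}, "inspection": {"varroa": True}},
    {"region": "North America", "probabilities": {"varroa": 0.2}, "inspection": {"varroa": False}},
    {"region": "New Zealand", "probabilities": {"varroa": 0.8}, "inspection": {"varroa": False}},
    {"region": "New Zealand", "probabilities": {"varroa": 0.7}, "inspection": {"varroa": True}},
]
print(accuracy_by_group(records, "varroa", "region"))
# {'North America': 1.0, 'New Zealand': 0.5}
```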

When we launched the project in the fall of 2017, we anticipated that it would take three months to produce and beta test our Bee Health Guru app; it took two years! It's not a finished product, but we are close to the finish line. That is where we need lots of beekeepers and bee colonies, and it is what we intend to do this summer: calibrate the sound and vibration signal decoding!

This spring (April) we will launch our app to the public on Kickstarter. We hope that every beekeeper in the world with a smartphone will support this launch and then download and use our app and upload the results. That could generate a massive data set. To make good use of that rich resource, we need a team ready and willing to process the initial data, re-train the AIs, and implement regional bee health mapping for the U.S., Canada, Europe, New Zealand, Australia, and any other English-speaking nation. Then we need to produce versions of the app in other languages to truly go global.

References:

- Drosopoulos, S. and M.F. Claridge. 2005. Insect Sounds and Communication: Physiology, Behaviour, Ecology, and Evolution. 32 Chapters. CRC Press, 553 pp.

- Bromenshenk et al. 2015. Bees as Biosensors: Chemosensory Ability, Honey Bee Monitoring Systems, and Emergent Sensor Technologies Derived from the Pollinator Syndrome. Biosensors 5(4), 678-711; https://doi.org/10.3390/bios5040678.